Tracing NVIDIA's Tech Roadmap: GTC Milestones and the AI Strategic Moat

The NVIDIA GPU Technology Conference (GTC) has evolved from a niche graphics event into the "Super Bowl of AI." Over the past six years, NVIDIA has successfully pivoted from being a component vendor to a full-stack infrastructure provider. By analyzing the trajectory from the Ampere architecture to the upcoming Rubin platform, we can decipher the "Gospel of Jensen" and identify the industrial moats that make NVIDIA nearly unassailable in the current market.

Ampere, Hopper, Blackwell, Rubin: NVIDIA's Generational AI Roadmap

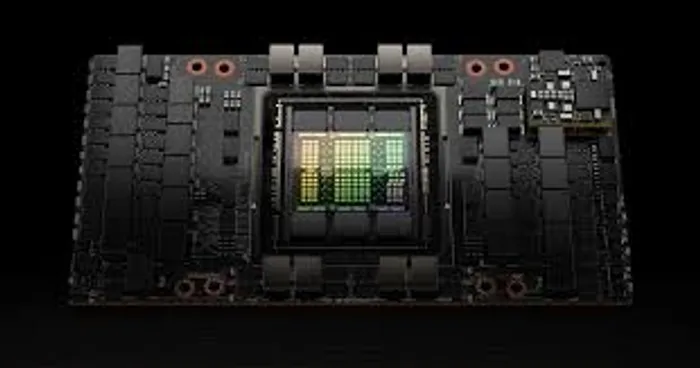

NVIDIA's market supremacy began in earnest with the 2020 launch of the Ampere (A100) architecture, which introduced Multi-Instance GPU (MIG) technology, allowing a single chip to be partitioned into isolated instances that run separate workloads. The 2022 Hopper (H100) architecture followed, a pivotal moment that introduced the Transformer Engine. By hardware-accelerating the specific mathematical patterns of Large Language Models (LLMs), NVIDIA didn't just build a faster chip; it built the only viable "currency" for the Generative AI revolution. This era marked NVIDIA's transition from a graphics component vendor to the foundational architect of the global AI data center. By most analyst estimates it captured roughly 70% to 90% of the AI accelerator market, and it established a hardware-software synergy that competitors are still struggling to replicate.

In 2024 and 2025, the Blackwell (B200) and Blackwell Ultra (B300) architectures shifted the industry's focus from individual chips to "rack-scale" systems. The Blackwell generation used dual-die packaging to put 208 billion transistors into a single GPU, while the GB200 NVL72 system effectively turned an entire server rack into one massive, liquid-cooled supercomputer. This phase addressed the "Energy Wall": NVIDIA claims up to 25x better energy efficiency for LLM inference compared with the Hopper generation. For investors, this signaled a massive capital shift toward liquid cooling infrastructure and advanced power management, as the physical constraints of data centers became the new bottleneck for AI scaling. It also created a secondary market of specialized infrastructure providers that are now essential to NVIDIA's deployments.

As of March 2026, the industry is transitioning to the Vera Rubin (R100) architecture, which represents a fundamental redesign of the AI stack. Rubin moves beyond the Grace CPU to the custom Vera CPU, paired with HBM4 (the fourth generation of High Bandwidth Memory) and a 1.6 Tb/s networking fabric. This generation is purpose-built for "Agentic AI": autonomous systems that do not just generate text but actively execute complex industrial and scientific workflows. The Rubin platform's performance leap reflects NVIDIA's strategy of staying ahead through an aggressive "one-year cadence," forcing competitors into a perpetual cycle of catch-up. By the time a rival matches Hopper or Blackwell, NVIDIA aims to have already redefined the standard for the next generation of autonomous computing.


Why Is NVIDIA's Moat Unassailable?

NVIDIA's primary competitive moat is a three-headed hydra: Software (CUDA), Networking (NVLink), and Supply Chain Supremacy. With over 4 million developers locked into the CUDA ecosystem, switching to a competitor requires a prohibitive overhaul of more than a decade's worth of software libraries. On the networking side, the acquisition of Mellanox gave NVIDIA control of the data center fabric (InfiniBand and Ethernet), while its proprietary NVLink interconnects GPUs at speeds far exceeding industry-standard PCIe. Together these make an all-NVIDIA cluster hard to match with a heterogeneous one. Finally, by securing the lion's share of TSMC's CoWoS packaging and HBM capacity through massive prepayments, NVIDIA has effectively built a "capital moat" that starves competitors of the manufacturing resources needed to scale, cementing its role as the indispensable landlord of the global AI revolution.
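
To put a rough number on the NVLink versus PCIe gap, here is a minimal back-of-the-envelope sketch in Python. The bandwidth figures are assumptions drawn from published nominal peak specs rather than from this article: about 1.8 TB/s per GPU for fifth-generation NVLink (Blackwell) and about 128 GB/s bidirectional for a PCIe 5.0 x16 slot. The 140 GB payload is hypothetical, and real-world throughput will be lower than these idealized peaks.

```python
# Back-of-the-envelope comparison of GPU interconnect bandwidth.
# ASSUMPTION: nominal peak bidirectional figures, not measured throughput.
NVLINK5_GB_PER_S = 1800.0    # fifth-generation NVLink, ~1.8 TB/s per GPU
PCIE5_X16_GB_PER_S = 128.0   # PCIe 5.0 x16, ~64 GB/s in each direction


def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Ideal time to move payload_gb at peak bandwidth, ignoring all overhead."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return payload_gb / bandwidth_gb_per_s


def bandwidth_ratio(fast_gb_per_s: float, slow_gb_per_s: float) -> float:
    """How many times faster the first link is than the second."""
    return fast_gb_per_s / slow_gb_per_s


if __name__ == "__main__":
    # HYPOTHETICAL payload: roughly the 16-bit weights of a 70B-parameter model.
    payload_gb = 140.0
    nvlink_s = transfer_seconds(payload_gb, NVLINK5_GB_PER_S)
    pcie_s = transfer_seconds(payload_gb, PCIE5_X16_GB_PER_S)
    ratio = bandwidth_ratio(NVLINK5_GB_PER_S, PCIE5_X16_GB_PER_S)
    print(f"NVLink: {nvlink_s:.3f} s, PCIe: {pcie_s:.3f} s, ratio: {ratio:.1f}x")
```

Under these assumed figures the gap is an order of magnitude, which is why GPU-to-GPU traffic inside a training cluster is the part of the stack where the interconnect matters most.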

Tianhao Xu is currently a financial content editor, focusing on fintech and market analysis. Previously, he worked as a full-time forex trader for several years, specializing in global currency trading and risk management. He holds a master’s degree in Financial Analysis.
