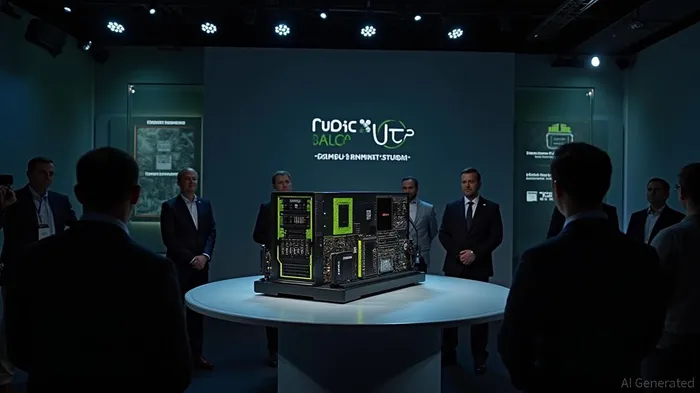

Nvidia Unveils Rubin CPX: A GPU for Long-Context Inference Tasks

By Ainvest

Tuesday, Sep 9, 2025, 12:51 pm ET

NVDA

Nvidia has unveiled the Rubin CPX GPU, designed for long-context inference with context windows larger than 1 million tokens. Part of Nvidia's Rubin series, the CPX is optimized for processing very long input sequences, which Nvidia says will improve performance on tasks such as video generation and software development. The Rubin CPX is expected to be available at the end of 2026.

NVIDIA has announced the Rubin CPX, a specialized GPU designed to accelerate long-context AI inference, with a focus on processing sequences longer than one million tokens. The chip, part of NVIDIA's Rubin series, targets workloads such as video generation and large-scale software development, where general-purpose GPUs can become a bottleneck.

The Rubin CPX is built on NVIDIA's next-generation Rubin architecture and is designed to handle the compute-intensive "context phase" of AI inference, in which the model processes the entire input, such as a whole codebase or a video file, before producing any output. With inputs this large, the context phase can become a significant performance bottleneck. By running this phase on separate hardware from the memory-intensive "generation" phase, in which the model produces output tokens one at a time, NVIDIA claims to improve throughput, reduce latency, and raise overall resource utilization [1].
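To make the compute-versus-memory distinction concrete, the following back-of-the-envelope roofline estimate compares the two phases for a single request. It is a simplified sketch, not NVIDIA's model: the accelerator figures (1 petaFLOP/s, 2 TB/s), the 70-billion-parameter model, and the 4-bit weights are hypothetical placeholders, and it uses the common rule of thumb of roughly 2 FLOPs per parameter per token while ignoring attention and KV-cache memory traffic.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    peak_flops: float     # FLOP/s at the chosen precision (hypothetical value)
    mem_bandwidth: float  # bytes/s of memory bandwidth (hypothetical value)


def roofline_time(flops: float, bytes_moved: float, hw: Accelerator) -> tuple[float, str]:
    """Lower bound on runtime: whichever of compute or memory traffic takes longer."""
    compute_s = flops / hw.peak_flops
    memory_s = bytes_moved / hw.mem_bandwidth
    if compute_s >= memory_s:
        return compute_s, "compute-bound"
    return memory_s, "memory-bound"


def context_phase(n_params: float, prompt_tokens: int, bytes_per_param: float) -> tuple[float, float]:
    # The whole prompt is processed in one pass: ~2 FLOPs per parameter per token,
    # while the weights are streamed from memory roughly once.
    return 2 * n_params * prompt_tokens, n_params * bytes_per_param


def generation_step(n_params: float, bytes_per_param: float) -> tuple[float, float]:
    # Each new output token costs ~2 FLOPs per parameter, but the weights must be
    # streamed from memory again for every step (batch size 1 assumed).
    return 2 * n_params, n_params * bytes_per_param


hw = Accelerator(peak_flops=1e15, mem_bandwidth=2e12)  # hypothetical chip
N_PARAMS = 70e9          # hypothetical 70B-parameter model
BYTES_PER_PARAM = 0.5    # 4-bit weights

ctx_time, ctx_bound = roofline_time(*context_phase(N_PARAMS, 100_000, BYTES_PER_PARAM), hw)
gen_time, gen_bound = roofline_time(*generation_step(N_PARAMS, BYTES_PER_PARAM), hw)
print(f"context phase (100k tokens): {ctx_time:.2f} s, {ctx_bound}")
print(f"one generation step: {gen_time * 1e3:.2f} ms, {gen_bound}")
```

With these placeholder numbers, the context phase comes out compute-bound (about 14 s of pure arithmetic) while each generation step is memory-bound (about 17.5 ms spent streaming weights). That asymmetry is the reason it can pay to run the two phases on differently balanced hardware.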

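The Rubin CPX delivers 30 petaFLOPs of NVFP4 (NVIDIA's 4-bit floating-point format) compute and carries 128GB of GDDR7 memory, a less expensive memory type than the high-bandwidth memory (HBM) used in NVIDIA's flagship data-center GPUs, making it a cost-effective option for compute-bound work. It also offers three times faster attention acceleration than the current-generation GB300 NVL72 system. Because attention is the part of the model whose cost grows fastest as inputs get longer, this speedup matters most for long-sequence processing [1].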
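Why attention speed matters for long inputs can be shown with a rough per-layer FLOP count. This sketch assumes a standard dense transformer layer with hidden size d, full multi-head attention, and a 4x MLP expansion, and it ignores causal masking, softmax, and normalization. Under those textbook assumptions (not figures from NVIDIA), the two sequence-by-sequence matrix multiplications in attention cost about 4·n²·d FLOPs for n tokens, while the weight matrix multiplications cost about 24·n·d²; the hidden size of 8192 is a hypothetical example.

```python
def attention_matmul_flops(seq_len: int, d_model: int) -> int:
    """Per-layer FLOPs for Q @ K^T and softmax(scores) @ V (each ~2 * n^2 * d)."""
    return 4 * seq_len**2 * d_model


def weight_matmul_flops(seq_len: int, d_model: int) -> int:
    """Per-layer FLOPs for the Q/K/V/output projections (~8 * n * d^2) plus a
    4x-expansion MLP (~16 * n * d^2), assuming standard multi-head attention."""
    return 24 * seq_len * d_model**2


def attention_share(seq_len: int, d_model: int) -> float:
    """Fraction of a layer's matmul FLOPs spent on attention; equals n / (n + 6d)."""
    attn = attention_matmul_flops(seq_len, d_model)
    return attn / (attn + weight_matmul_flops(seq_len, d_model))


D_MODEL = 8192  # hypothetical hidden size
for n in (8_192, 131_072, 1_048_576):
    print(f"{n:>9,} tokens: attention is {attention_share(n, D_MODEL):.0%} of layer FLOPs")
```

Under these assumptions, attention grows from about one seventh of the work at 8K tokens to more than 95% at roughly one million tokens, since its cost is quadratic in sequence length while everything else is linear.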

NVIDIA is offering the Rubin CPX as part of the Vera Rubin NVL144 CPX platform, a disaggregated serving system housed in a single rack, meaning the context and generation phases run on different GPUs. The platform combines 144 Rubin CPX GPUs for context processing, 144 standard Rubin GPUs for the generation phase, and 36 Vera CPUs, delivering a combined 8 exaFLOPs of NVFP4 compute. The rack also includes 100TB of high-speed memory and 1.7 petabytes per second of memory bandwidth [1].
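The platform figures can be cross-checked with simple arithmetic using only the numbers reported above. This sketch assumes decimal units (1 exaFLOP = 1,000 petaFLOPs, 1 TB = 1,000 GB) and treats the rack-level 8 exaFLOPs as a rounded total, so the derived figures for the rest of the rack are rough inferences, not NVIDIA specifications.

```python
# Figures as reported for the Vera Rubin NVL144 CPX rack
CPX_GPUS = 144
CPX_PFLOPS_NVFP4 = 30      # per Rubin CPX GPU
CPX_MEMORY_GB = 128        # GDDR7 per Rubin CPX GPU
RUBIN_GPUS = 144           # standard Rubin GPUs for the generation phase
RACK_EFLOPS_NVFP4 = 8      # rack total (assumed to be rounded)
RACK_FAST_MEMORY_TB = 100  # rack total

# Compute and memory contributed by the context-phase (CPX) GPUs alone
cpx_eflops = CPX_GPUS * CPX_PFLOPS_NVFP4 / 1_000
cpx_memory_tb = CPX_GPUS * CPX_MEMORY_GB / 1_000

# Inferred remainder for the rest of the rack (rough, since the totals are rounded)
other_eflops = RACK_EFLOPS_NVFP4 - cpx_eflops
implied_pflops_per_rubin = other_eflops * 1_000 / RUBIN_GPUS
other_memory_tb = RACK_FAST_MEMORY_TB - cpx_memory_tb

print(f"CPX compute: {cpx_eflops:.2f} EF of {RACK_EFLOPS_NVFP4} EF")
print(f"Implied per standard Rubin GPU: ~{implied_pflops_per_rubin:.1f} PF")
print(f"CPX memory: {cpx_memory_tb:.1f} TB of {RACK_FAST_MEMORY_TB} TB")
print(f"Rest of rack memory: ~{other_memory_tb:.1f} TB")
```

On these numbers, the 144 CPX GPUs supply a little over half of the rack's compute (about 4.3 exaFLOPs) but less than a fifth of its fast memory (about 18.4 TB), which is consistent with the split described above: compute-heavy context work on CPX, with most of the memory sitting in the rest of the rack.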

The Rubin CPX is set to be available at the end of 2026, and NVIDIA has confirmed that it will offer a separate, attachable rack of CPX nodes for customers looking to upgrade existing installations [1].

NVIDIA's move to build specialized hardware for specific AI tasks signals a maturing market: rather than simply adding raw power to a single chip design, the industry is moving toward more sophisticated, full-stack optimization of the entire AI workflow [1].

The announcement comes as NVIDIA's rivals are still working to develop their own AI accelerators. Companies such as Amazon and Meta are building in-house AI chips, but many of these efforts have run into challenges in chip design and development [2].

NVIDIA's disaggregated approach, which splits inference across hardware specialized for each phase instead of running every phase on the same GPU, represents a fundamentally different architectural philosophy. It could help the company reinforce its market leadership by offering a chip purpose-built for a specific, high-value task [1].

NVIDIA expects the Rubin CPX to significantly increase the productivity and performance of "AI factories," its term for large-scale AI data centers, transforming AI coding assistants and enabling more efficient processing of video content [1].

References:

[1] https://winbuzzer.com/2025/09/09/nvidia-unveils-rubin-cpx-a-specialized-gpu-to-accelerate-long-context-ai-inference-xcxwbn/

[2] https://nvidianews.nvidia.com/news/nvidia-unveils-rubin-cpx-a-new-class-of-gpu-designed-for-massive-context-inference

Editorial Disclosure & AI Transparency: Ainvest News utilizes advanced Large Language Model (LLM) technology to synthesize and analyze real-time market data. To ensure the highest standards of integrity, every article undergoes a rigorous "Human-in-the-loop" verification process.

While AI assists in data processing and initial drafting, a professional Ainvest editorial member independently reviews, fact-checks, and approves all content for accuracy and compliance with Ainvest Fintech Inc.’s editorial standards. This human oversight is designed to mitigate AI hallucinations and to ensure accurate financial context.

Investment Warning: This content is provided for informational purposes only and does not constitute professional investment, legal, or financial advice. Markets involve inherent risks. Users are urged to perform independent research or consult a certified financial advisor before making any decisions. Ainvest Fintech Inc. disclaims all liability for actions taken based on this information.