DOLA Introduces Retrieval-Augmented Decoding to Improve AI Truthfulness

Researchers introduced Retrieval-Augmented Decoding (RAD), a method for reducing hallucinations in large language models (LLMs), according to the paper.

RAD uses a compact grounding space built from minimal annotated data to shape model outputs during inference, as described in the research.

The technique outperforms existing methods on benchmarks such as TruthfulQA and HaluEval and shows strong cross-task generalization, according to the study.

The paper introduces a novel approach to the persistent problem of factual inaccuracy in LLMs. This problem, particularly prevalent in open-ended generation tasks, has long hindered the deployment of LLMs in high-stakes applications. Traditional mitigation strategies such as fine-tuning or in-context learning often require significant annotated data or computational resources, which limits their scalability.

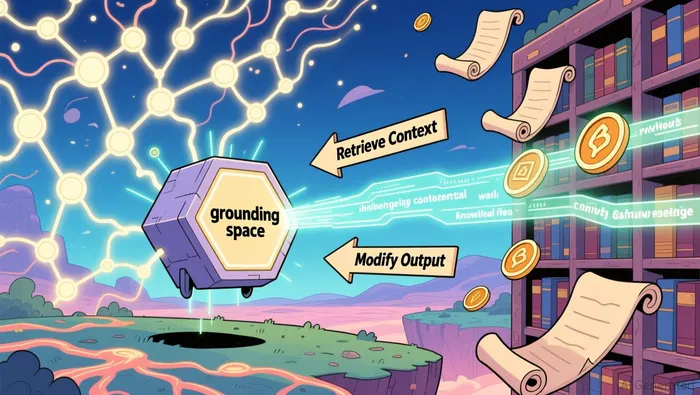

Retrieval-Augmented Decoding (RAD) operates by integrating retrieved context into the decoding process. It uses a compact reference grounding space constructed from as few as 10 annotated examples. This space contains pairs of context embeddings and next-token logits derived from truthful responses. During inference, RAD retrieves semantically similar contexts and modifies the model's logits accordingly.
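To make the idea of a grounding space concrete, here is a minimal sketch of how such a store of (context embedding, next-token logits) pairs might be assembled from a handful of annotated truthful answers. It is an illustration under stated assumptions, not the paper's implementation: `build_grounding_space`, `toy_embed`, and `toy_logits` are hypothetical names, and the two toy functions are stand-ins for whatever embedding and logits the actual model would produce.

```python
import numpy as np

VOCAB_SIZE = 6  # assumed toy vocabulary size
DIM = 4         # assumed toy embedding dimension

def toy_embed(prefix):
    """Hypothetical stand-in for a model's context embedding of a token prefix."""
    seed = sum((i + 1) * t for i, t in enumerate(prefix))
    return np.random.default_rng(seed).standard_normal(DIM)

def toy_logits(prefix):
    """Hypothetical stand-in for a model's next-token logits given a prefix."""
    return np.random.default_rng(len(prefix)).standard_normal(VOCAB_SIZE)

def build_grounding_space(examples, embed_fn, logits_fn):
    """Collect one (embedding, logits) pair per token of each truthful answer.

    examples: list of (prompt_ids, answer_ids) pairs of token-id lists.
    """
    keys, values = [], []
    for prompt_ids, answer_ids in examples:
        for t in range(len(answer_ids)):
            # Context = prompt plus the truthful answer tokens seen so far.
            prefix = prompt_ids + answer_ids[:t]
            keys.append(embed_fn(prefix))
            values.append(logits_fn(prefix))
    return np.stack(keys), np.stack(values)

# Two made-up annotated examples, given directly as token ids.
examples = [([1, 2], [3, 4, 0]), ([2, 5], [1, 0])]
keys, values = build_grounding_space(examples, toy_embed, toy_logits)
print(keys.shape, values.shape)
```

With answers of three and two tokens, the store holds five pairs. Even a real grounding space built from around 10 examples would stay small enough to search exhaustively at every decoding step.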

The method's lightweight design lets it work without model retraining, making it adaptable across various LLMs. In testing, RAD performed robustly across four open-ended generation benchmarks, surpassing existing decoding strategies in factual accuracy and generalization, according to the research.

How Does Retrieval-Augmented Decoding Work?

RAD is a context-aware adaptive decoding method that modifies token selection at inference time. At each decoding step, it retrieves high-quality, semantically similar contexts from a pre-built grounding space. The next-token logits stored with those contexts are aggregated and used to reshape the model's own next-token logits, guiding the model toward factually grounded outputs, as the paper demonstrates.
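A single decoding step of this kind can be sketched as follows. The article does not give the exact retrieval metric or aggregation rule, so the choices here (cosine similarity, top-k retrieval, softmax-weighted averaging, and additive mixing with weight `alpha`) are assumptions made for illustration, and the function names are hypothetical.

```python
import numpy as np

def retrieve_top_k(query, keys, k):
    """Return indices and cosine similarities of the k most similar stored contexts."""
    q = query / np.linalg.norm(query)
    normed_keys = keys / np.linalg.norm(keys, axis=1, keepdims=True)
    sims = normed_keys @ q
    top = np.argsort(-sims)[:k]
    return top, sims[top]

def reshape_logits(model_logits, query, keys, stored_logits,
                   k=2, alpha=1.0, temperature=0.1):
    """Add a similarity-weighted average of retrieved logits to the model's logits."""
    top, sims = retrieve_top_k(query, keys, k)
    weights = np.exp((sims - sims.max()) / temperature)  # numerically stable softmax
    weights /= weights.sum()
    retrieved = weights @ stored_logits[top]
    return model_logits + alpha * retrieved

# Toy grounding space: three stored contexts over a three-token vocabulary.
keys = np.array([[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]])
stored_logits = np.array([[0.0, 5.0, 0.0],
                          [5.0, 0.0, 0.0],
                          [0.0, 4.0, 0.0]])

query = np.array([1.0, 0.0])            # embedding of the current context
model_logits = np.array([1.0, 0.5, 0.0])  # the unmodified model prefers token 0

adjusted = reshape_logits(model_logits, query, keys, stored_logits)
print(int(np.argmax(model_logits)), int(np.argmax(adjusted)))
```

In this toy setup the two stored contexts nearest to the query both favor token 1, so the adjusted logits shift the top choice from token 0 to token 1. Everything happens within a single generation pass, and the model's weights are never touched.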

The grounding space is designed to be compact, requiring minimal annotated data for construction. This approach reduces the need for extensive datasets or retraining, addressing scalability concerns. By focusing on a small, high-quality subset of examples, RAD optimizes the model's factual reliability while minimizing computational overhead.

What Are the Key Advantages of RAD?

One key advantage of RAD is its ability to generalize across different tasks and models. Unlike methods that rely on model-specific structures or prompt engineering, RAD is architecture-agnostic. This adaptability matters in a rapidly evolving field where diverse LLMs are deployed in a wide range of applications, according to the research.

Additionally, RAD avoids the computational overhead associated with multiple-generation strategies like integrative decoding. By using a single generation pass, it maintains efficiency without compromising accuracy. This efficiency is a significant factor for investors and developers seeking scalable solutions for real-world deployment.

What Limitations Exist for Retrieval-Augmented Decoding?

Despite its advantages, RAD has limitations. Its effectiveness depends on the quality and relevance of the grounding space. If the annotated data is insufficient or misaligned with the task, the model's performance may not improve as expected. This dependency underscores the importance of curating high-quality examples when constructing the grounding space, as the paper notes.

Another limitation is the current focus on open-ended generation tasks. While the results are promising, further testing across closed-ended and more complex applications is necessary to confirm its broad applicability. These factors suggest that while RAD represents a significant step forward, continued refinement and testing will be essential for broader adoption.
