Optimizing LLM Inference with NVIDIA Run:ai Model Streamer: Reducing Cold Start Latency

By Ainvest

Tuesday, September 16, 2025, 2:05 pm ET (1 min read)


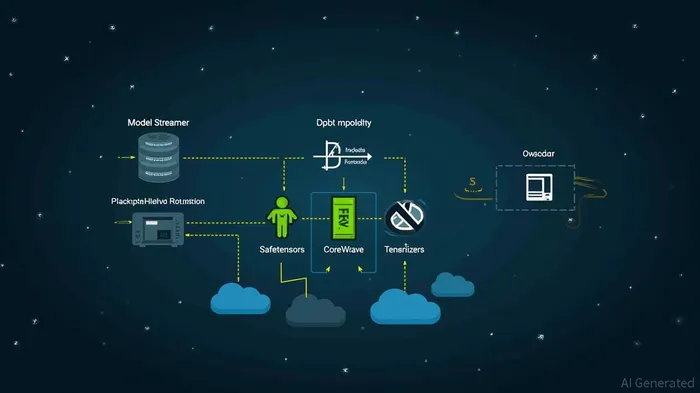

NVIDIA's Run:ai Model Streamer reduces cold start latency for LLM inference by reading model weights from storage concurrently and streaming them into GPU memory. In benchmarks against Hugging Face's Safetensors loader and CoreWeave Tensorizer, the Model Streamer significantly lowered model loading times, including in cloud environments. It remains compatible with the Safetensors format, and it shortens time-to-inference by saturating the available storage throughput.
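The general idea of concurrent reads can be illustrated with a minimal sketch. This is not the Run:ai Model Streamer's implementation or API. It is an assumption-laden illustration using only the Python standard library, in which the hypothetical function `read_concurrently` has several threads each read a different byte range of one file into a single preallocated buffer, and that buffer stands in for the memory that weights would be staged in before reaching the GPU.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def read_concurrently(path, num_workers=4, chunk_size=1 << 20):
    """Read a file into one preallocated buffer using several threads.

    Illustrative only: a real streamer would target pinned host or GPU
    memory; a plain bytearray stands in for that destination here.
    """
    size = os.path.getsize(path)
    buffer = bytearray(size)
    view = memoryview(buffer)

    def read_chunk(offset):
        # Each worker opens its own handle so seeks do not interfere.
        end = min(offset + chunk_size, size)
        filled = offset
        with open(path, "rb") as f:
            f.seek(offset)
            # readinto may return fewer bytes than requested, so loop
            # until this worker's byte range is full or EOF is reached.
            while filled < end:
                n = f.readinto(view[filled:end])
                if not n:
                    break
                filled += n
        return filled - offset

    # Non-overlapping byte ranges, so workers never write the same bytes.
    offsets = range(0, size, chunk_size)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        total = sum(pool.map(read_chunk, offsets))
    return bytes(buffer), total
```

Calling `read_concurrently("model.safetensors", num_workers=8)` on a hypothetical weights file would return the file contents together with the number of bytes read. Whether more workers actually help depends on the storage backend; the intuition is that a single sequential reader often leaves storage bandwidth unused.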

Editorial disclosure and AI transparency: Ainvest News uses advanced Large Language Model (LLM) technology to synthesize and analyze market data in real time. To ensure the highest standards of integrity, every article goes through a rigorous verification process with human involvement.

While AI assists with data processing and initial drafting, a professional member of the Ainvest editorial staff independently reviews, verifies, and approves all content to ensure its accuracy and compliance with the editorial standards of Ainvest Fintech Inc. This human oversight is designed to mitigate AI hallucinations and to ensure the financial context.

Investment disclaimer: This content is provided for informational purposes only and does not constitute professional investment, legal, or financial advice. Markets carry inherent risks. Users are advised to conduct independent research or consult a certified financial advisor before making any decision. Ainvest Fintech Inc. disclaims all liability for actions taken based on this information.
